Page switching in mixed-device web surveys: prevalence and data quality

Baier, T. & Fuchs, M. (2020). Page switching in mixed-device web surveys: prevalence and data quality. Survey Methods: Insights from the Field, Special issue: ‘Advancements in Online and Mobile Survey Methods’. Retrieved from https://surveyinsights.org/?p=13446

© the authors 2020. This work is licensed under a Creative Commons Attribution 4.0 International License (CC BY 4.0)

Abstract

As a self-administered survey mode, web surveys allow respondents to temporarily leave the survey page and switch to another web page in a different browser tab or to another window/app. This form of sequential multitasking has the potential to disrupt the response process and reduce data quality if respondents become distracted (Krosnick, 1991; Sendelbah et al., 2016). Browser data indicating respondents leaving the survey page allow non-reactive measurement of their multitasking. We investigated the prevalence of page switching, number of switching events and time spent absent per event with respect to respondents’ characteristics and devices used. Furthermore, we analysed the association with data quality (item missing, differentiation in grid questions and number of characters to open-ended questions). The results indicate that the prevalence of page switching is relatively low and the durations of page switching events are rather short. Also, respondents using a PC/tablet are more likely to leave the survey page than those using a smartphone. As to data quality, we did not find any correlation between page switching and the quality of the answers. Thus, this study provides no evidence that multitasking poses a threat to data quality. The findings are discussed with respect to the delimitations of multitasking using browser paradata.

Keywords

data quality, mixed-device, mobile web surveys, multitasking, paradata

Introduction

As web surveys are a self-administered mode, respondents can easily multitask; they are free to engage in other activities and temporarily neglect the survey while doing so (Zwarun & Hall, 2014). Multitasking can take the form of simultaneous or sequential activities; that is, respondents can perform tasks simultaneously or switch from one task to another sequentially (Salvucci & Taatgen, 2010). In real-world survey settings, multitasking often occurs as a combination of both forms. A survey participant, for example, might be engaged in a simultaneous activity (e.g., listening to music) or a sequential one (e.g., checking e-mails). Another way to classify forms of multitasking in the context of surveys is to differentiate between secondary environmental (e.g., listening to music in the background), non-media (e.g., having a conversation) and media activities (e.g., watching TV or checking e-mails) (Zwarun & Hall, 2014). Respondents can be involved in secondary environmental activities that do not require them to temporarily leave the survey, but this aspect can affect the final outcome, as such involvement might be detrimental to their attentiveness. Secondary non-media and media activities, however, require switching fully to another task.

When respondents are involved in secondary activities, all four stages of the question-answer process (Tourangeau, Rips, & Rasinski, 2000) are potentially affected: incomplete processing of the question can be detrimental to comprehension, respondents might not sufficiently engage in retrieving information and its subsequent judgement, and the formatting of the answer might be suboptimal (Kennedy, 2010). Accordingly, multitasking is considered to be a form of distraction, potentially leading to an insufficient response process (Krosnick, 1991) and causing a negative impact on data quality.

Multitasking in survey research has mostly been studied using participants’ self-reports on secondary activities they were engaged in while taking the survey. In telephone surveys, 46% (Aizpurua, Heiden, Park, Wittrock, & Losch, 2018) and 51% (Lavrakas, Tompson, Benford, & Fleury, 2010) of respondents reported some form of multitasking. As to web surveys, 30% of respondents reported multitasking in an online panel study (Zwarun & Hall, 2014). The findings concerning detrimental effects of multitasking on data quality are inconsistent: Kennedy (2010) showed that question comprehension in mobile phone surveys was negatively affected when respondents were eating and drinking during the survey. Yet, no negative effects of other behaviours performed during multitasking episodes were evident. Apart from one knowledge question in a dual-frame telephone survey (Aizpurua et al., 2018), multitasking respondents did not produce lower data quality when answering questions.

These inconsistent findings might be partly attributed to the potentially low reliability of self-reports regarding multitasking (Lottridge, Marschner, Wang, Romanovsky, & Nass, 2012). In recent years, methods have evolved to allow researchers to measure multitasking non-reactively, that is, without asking respondents about their behaviours. These new methods rely on paradata, that is, process data that arise as a by-product of data collection at the respondent level (Callegaro, 2013) and allow researchers to investigate respondents’ behaviour unobtrusively and non-reactively (Couper, 2000). In the context of web surveys, paradata can provide information on the response process, such as the device used, response times or answer changes. Web surveys allow researchers to easily obtain this auxiliary information, as data collection is computer-assisted. For example, data generated by the browser provide researchers with insights regarding respondents’ behaviours without direct questioning. This method is particularly valuable to investigate on-device multitasking, since browser data can be used to track whether respondents interrupt answering the survey and are temporarily engaged in on-device activity by switching from the survey page to another tab or window.

Sendelbah, Vehovar, Slavec, and Petrovcic (2016) used paradata on the inactivity of the survey page to measure multitasking in a web survey (PC only). Their results indicate that 40% of the respondents left the survey page at least once, the mean of absence from the survey amounting to 86 seconds. No detrimental effect was observed on differentiation in grid questions. However, leaving the survey was associated with a higher item non-response. Another study among PC respondents found the same association of page switching (as measured by paradata) and item non-response (Höhne & Schlosser, 2018). Höhne and colleagues (2020) measured the inactivity of the survey page with paradata to investigate on-device multitasking both among PC and smartphone respondents. Remarkable device differences were found, wherein 14.8% of the PC respondents left the survey at least once, significantly more often than the smartphone respondents (9.3%). The PC respondents were also found to leave the survey more often (2.5 times on average) and for a longer time (161.3 seconds on average) than their smartphone counterparts (1.5 times and 105.4 seconds, respectively). On-device multitasking on pages with multiple items was significantly associated with choosing the middle category more often (middle response style). However, no associations were uncovered between the respondents leaving the survey and the extreme response style and item non-response.

The aim of this study is to contribute to the investigation of on-device multitasking by analysing respondents’ page switching behaviours. We will focus on the prevalence, frequency and duration of page switching in web surveys. In addition, we aim to compare these indicators for PC/tablet and smartphone respondents. We expect smartphone respondents to be less likely to leave the survey page, since page switching on a small screen using a mobile browser and touch input is assumed to be more burdensome than that on a larger screen and when using a PC/tablet keyboard with a mouse and/or touchpad. As mentioned above, we assume that page switching has the potential to negatively affect all stages of the response process. Accordingly, page switching respondents are expected to produce lower-quality survey answers.

When investigating page switching respondents, one has to decide how to identify this group and delimit their behaviour. We first discuss the definition and measurement of respondent on-device multitasking. Next, we report results from three studies in which we measured the prevalence as well as the duration of page switching. Prevalence rates in this work are analysed with respect to respondents’ characteristics and the device used. Finally, we test the association of multitasking and data quality (item non-response, differentiation in grid questions and answers to open-ended questions).

Data and methods

Data

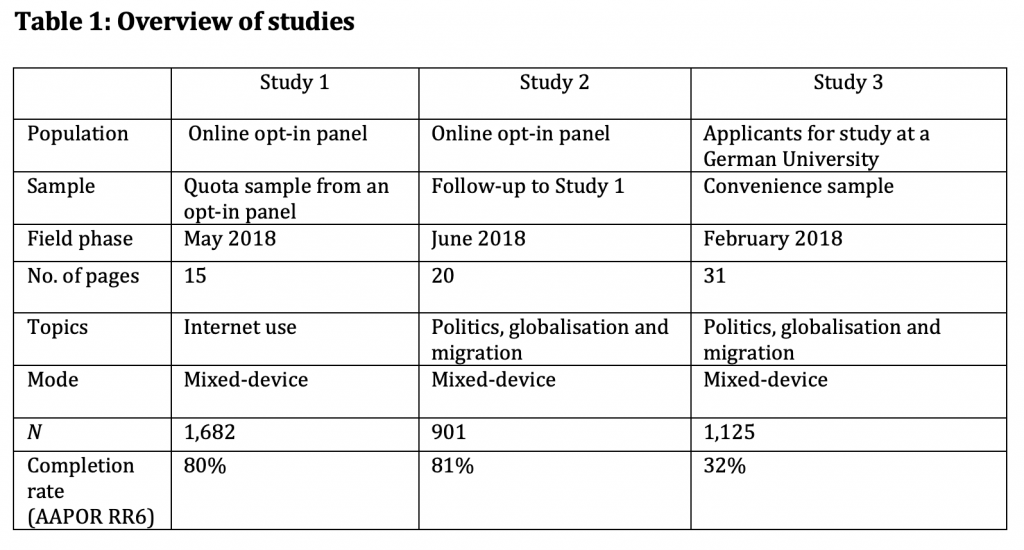

We used data from three studies conducted in 2018 (see Table 1). The data of Study 1 were collected in Germany through the opt-in online panel respondi (https://www.respondi.com), with cross quotas for age (18–29, 30–39, 40–49, 50–59 and 60+ years) and gender, and independent quotas for education (low, medium and high). The gross sample of Study 1 was expected to match these demographic characteristics of the general population in Germany. The questions covered Internet use and related topics. Study 2 was administered as a second wave to the respondents of Study 1. Unit non-response in the second wave did not change the sample composition with respect to gender, age and education. The respondents in the second wave were randomly assigned to the same device they used in the first wave (PC/tablet or smartphone) or to the other device. As a result, the second wave contained considerably more smartphone respondents (45%) than the first wave (24%). As the rate of the respondents who did not comply with this assignment was about 25%, the groups of PC/tablet users and smartphone users were not treated as they would have been under randomised conditions; rather, they were considered as self-selected groups. The questionnaire of Study 2 included topics on politics, globalisation and migration. Study 3 consists of a convenience sample among university applicants who applied to study at the Darmstadt University of Technology. The questionnaire covered topics on politics, globalisation and migration. All three studies were mixed-device studies and applied optimised design for smartphone respondents, so that grid questions were displayed as vertical stand-alone items on a small screen to avoid horizontal scrolling (see Figure 1 in the Appendix). The respondents self-selected the device used for answering the questions. In Studies 2 and 3, respondents were assigned a device but often chose another device.

Methods

To detect page switching, the JavaScript-based tool Embedded Client Side Paradata (ECSP) (Schlosser & Höhne, 2018) was implemented using the online survey software Unipark. ECSP logs the inactivity of a respondent on the survey page, transmits these data when the page is submitted, and stores timestamps for the time absent from the survey page.
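How timestamps of this kind translate into the page switching measures can be illustrated with a minimal sketch. The log format below (pairs of an event kind and the seconds since page load) and the function names are assumptions made for illustration; they are not the ECSP output format.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical log format (not the ECSP format): (kind, seconds since page load),
# where kind is "leave" (survey page lost focus) or "return" (page regained focus).
Event = Tuple[str, float]


def absence_durations(events: List[Event]) -> List[float]:
    """Pair each "leave" with the next "return" and return the absences in seconds."""
    durations: List[float] = []
    left_at: Optional[float] = None
    for kind, t in sorted(events, key=lambda e: e[1]):
        if kind == "leave" and left_at is None:
            left_at = t
        elif kind == "return" and left_at is not None:
            durations.append(t - left_at)
            left_at = None
    # A "leave" without a matching "return" is ignored in this sketch.
    return durations


def summarise(events: List[Event]) -> Dict[str, object]:
    """Derive the dichotomous indicator, the event count and the mean time per event."""
    durations = absence_durations(events)
    count = len(durations)
    return {
        "switched": count > 0,
        "count": count,
        "mean_seconds": sum(durations) / count if count else None,
    }


log = [("leave", 12.0), ("return", 20.5), ("leave", 40.0), ("return", 41.0)]
print(summarise(log))
```

In this toy log the respondent leaves the page twice, for 8.5 and 1.0 seconds.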

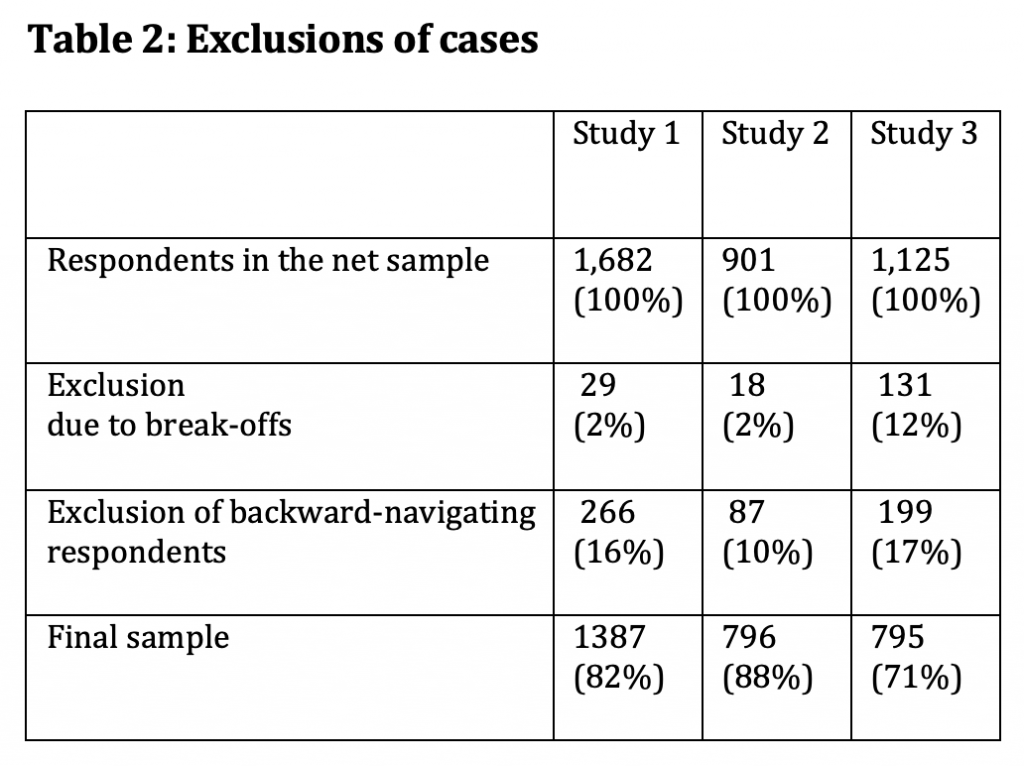

In order to maintain comparable groups of respondents across studies and survey pages, we used the following exclusion criteria: (1) We excluded the respondents who did not complete the questionnaire. The inclusion of the respondents who broke off would bias the results, as they have less opportunity to commit page switching compared to respondents who completed the questionnaire, because the former would have visited fewer survey pages. (2) We excluded the respondents who navigated backwards in the questionnaire. Although the respondents were not offered a back button in the survey, navigation in the questionnaire using the browser’s back button and submitting a page once more was possible in all three studies. The ECSP tool collected client-side browser data when the page was submitted by the respondents. Thus, when the respondents went back to a previous survey page and submitted it again, the original paradata were overwritten.

The exclusion criteria for the respondents reduced the samples available for analysis by 12–29% (see Table 2). The two excluded groups in the three studies contributed to this overall loss of cases to different extents. While break-off was negligible in Studies 1 and 2, a considerably higher percentage of respondents did not complete the questionnaire in Study 3. With regard to backward navigation, remarkably fewer respondents had to be excluded in Study 2 than in the other two studies.

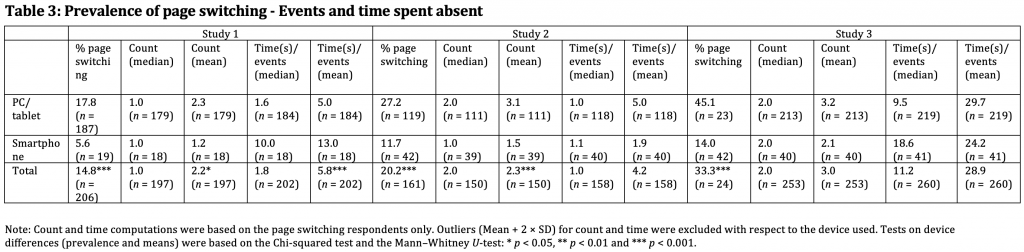

Page switching was included in the analysis as a dichotomous variable (page switching occurred at least once in the questionnaire vs. no page switching). In addition, two continuous variables (count of page switching events and number of seconds the respondent was absent per page switching event) were computed for the page switching respondents only. Given the highly skewed distributions of the continuous variables, we excluded switching counts and times that exceeded the mean plus two times the standard deviation (SD) separately for the PC/tablet respondents and the smartphone respondents for descriptive and regression analyses.
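The outlier rule described above can be sketched as follows. The sketch assumes the sample standard deviation (the text does not say whether the sample or population SD was used), and the function name and toy counts are hypothetical.

```python
from statistics import mean, stdev
from typing import Dict, List


def exclude_outliers(values_by_device: Dict[str, List[float]], k: float = 2.0) -> Dict[str, List[float]]:
    """Drop values above mean + k * SD, computed separately within each device group."""
    kept: Dict[str, List[float]] = {}
    for device, values in values_by_device.items():
        if len(values) < 2:
            # The SD is undefined for fewer than two values; keep them unchanged.
            kept[device] = list(values)
            continue
        # Assumption: sample SD (n - 1 in the denominator).
        threshold = mean(values) + k * stdev(values)
        kept[device] = [v for v in values if v <= threshold]
    return kept


# Toy switching counts, not data from the studies.
counts = {
    "pc": [1, 1, 1, 2, 2, 2, 3, 3, 40],
    "smartphone": [1, 1, 1, 2, 2, 2, 3, 3, 3],
}
print(exclude_outliers(counts))
```

Because the threshold is computed per device group, a value that counts as extreme among smartphone respondents may still be retained among PC/tablet respondents, and vice versa.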

In order to assess the consequences of page switching on data quality, we used the following indicators: item non-response, degree of differentiation in the matrix questions and lengths of responses to open-ended questions. Other indicators were not consistently available in all three studies. Due to relatively low item non-response rates in all the studies and highly skewed distributions, we did not treat item missing as a continuous variable; instead, we considered it as a dichotomous variable, with 0 indicating no item missing and 1 indicating any item missing in the questionnaire. For the degree of differentiation, we computed an index indicating the mean over the degree of differentiation over all grid questions in the questionnaire (McCarty & Shrum, 2000). In the rare case of missing items in a grid question, the degree of differentiation was computed based on the number of available items. The lengths of the answers to the open-ended questions were measured by the number of characters. Non-substantive answers were coded as zero, and answers that exceeded the mean number of characters plus two times the SD separately for PC/tablet respondents and smartphone respondents were excluded.
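A minimal sketch of two of these indicators follows. It assumes that the degree of differentiation takes the form 1 − Σ p², where p is the share of a grid's answers falling on each scale point; this is one common reading of the index and is not spelled out in the text above. Missing answers are represented as `None`, and all function names are hypothetical.

```python
from collections import Counter
from typing import List, Optional, Sequence

Answer = Optional[int]  # None marks a missing item


def differentiation(grid: Sequence[Answer]) -> Optional[float]:
    """Assumed index: 1 - sum of squared shares per scale point, using available items only."""
    answered = [a for a in grid if a is not None]
    if not answered:
        return None
    n = len(answered)
    return 1.0 - sum((c / n) ** 2 for c in Counter(answered).values())


def mean_differentiation(grids: Sequence[Sequence[Answer]]) -> Optional[float]:
    """Mean of the index over all grid questions that have at least one answer."""
    scores: List[float] = [d for d in (differentiation(g) for g in grids) if d is not None]
    return sum(scores) / len(scores) if scores else None


def any_item_missing(answers: Sequence[Answer]) -> int:
    """Dichotomous indicator: 1 if any item in the questionnaire is missing, else 0."""
    return int(any(a is None for a in answers))


print(differentiation([3, 3, 3, 3]))  # straightlining: no differentiation
print(differentiation([1, 2, 3, 4]))  # every item on a different scale point
```

Under this assumed form, a respondent who selects the same scale point for every item of a grid scores 0, and the score rises as answers spread over more scale points.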

When comparing PC/tablet and smartphone respondents concerning page switching, we compensated for the self-selection effect of the respondents choosing their device for answering the questionnaire. To compensate for a potential bias, we included the following background variables as control variables: age, gender and education. As all studies contained information on schooling but no information on tertiary education, a variable on education was coded as a dichotomous variable denoting lower secondary education (German Hauptschule and Realschule) and upper secondary education (German Abitur).

Results

Prevalence of page switching and time spent absent

The prevalence of respondents leaving the survey page at least once during the survey ranged from 11% in Study 1 to 33% in Study 3 (Table 3). The lower percentages in the samples from the opt-in panel (Studies 1 and 2) were presumably at least in part due to the participants being experienced respondents who earned rewards for their participation. The findings on the number of page switching events and time per page switching event spent absent were based on the page switching respondents only. Even after excluding outliers, the distributions of the latter variables were skewed, with the medians being substantially lower than the means. The number of page switching events among the page switching respondents did not exceed 3.2 (mean) and 2 (median) in any of the studies. Comparing the lengths of the studies, respondents exposed to the longer questionnaires of Studies 2 and 3 exhibited more page switching events. On average, page-switching respondents left the survey after 13.1 (Study 1), 7.5 (Study 2) and 8.2 (Study 3) survey pages. In all the studies, the smartphone respondents were less than half as likely to commit page switching as compared to those using a PC/tablet to answer the questions (Table 3). As to the count and time variable, however, we found no consistent pattern across devices.

Respondents’ characteristics and device differences in page switching

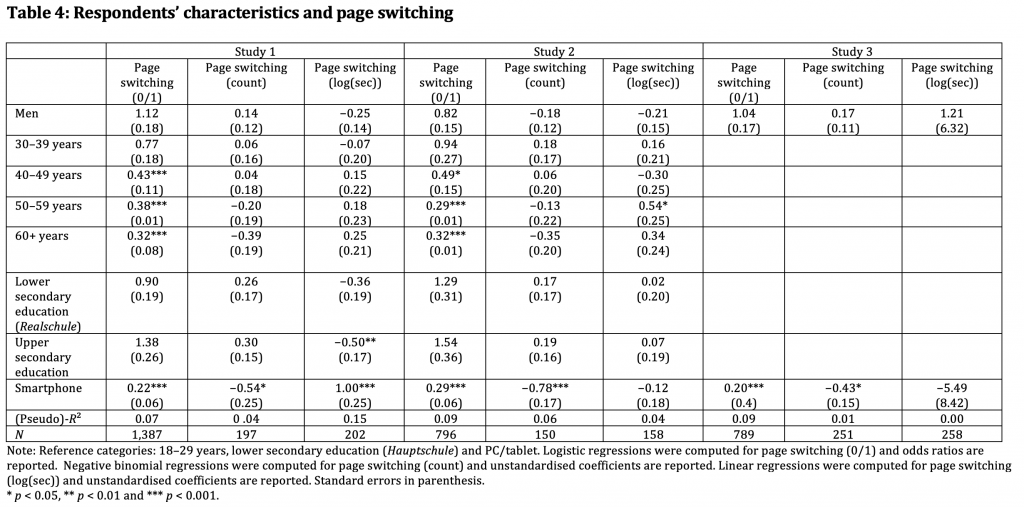

The effect of the respondents choosing their device for answering the questions needs to be considered when analysing differences in the prevalence, frequencies and lengths of page switching of smartphone respondents vs. PC/tablet respondents. The studies used in this work offer only a limited set of variables, namely education, age and gender, to control for any potential self-selection bias. Logistic regression was fitted with the dependent variable (0/1) for page switching. For the page switching respondents, negative binomial regression was fitted for the switching count, and linear regression was fitted for the log of seconds absent. In addition to the device used (PC/tablet vs. smartphone), gender, age and education were introduced as control variables (see Table 4). For Study 3 (the sample of university applicants), only gender was used, as all the applicants had the same educational level and mostly belonged to the same age category (only respondents with a high school diploma or equivalent; mean age = 21.9 years, SD of age = 3.0 years).
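To illustrate how the device coefficient of such a logistic model can be read, the sketch below uses the simplest case without control variables: with an intercept and a single binary predictor, the maximum-likelihood coefficient equals the log of the odds ratio in the 2×2 table of device by page switching. The counts are invented for illustration and are not taken from the studies; the models reported in Table 4 additionally include control variables.

```python
import math


def log_odds_ratio(switch_sp: int, n_sp: int, switch_pc: int, n_pc: int) -> float:
    """Log odds ratio of page switching, smartphone vs. PC/tablet (reference group)."""
    odds_sp = switch_sp / (n_sp - switch_sp)
    odds_pc = switch_pc / (n_pc - switch_pc)
    return math.log(odds_sp / odds_pc)


def standard_error(switch_sp: int, n_sp: int, switch_pc: int, n_pc: int) -> float:
    """Large-sample standard error of the log odds ratio from the four cell counts."""
    cells = [switch_sp, n_sp - switch_sp, switch_pc, n_pc - switch_pc]
    return math.sqrt(sum(1.0 / c for c in cells))


# Hypothetical counts: 10 of 110 smartphone and 50 of 250 PC/tablet respondents switch.
b = log_odds_ratio(10, 110, 50, 250)
print(round(b, 3), round(math.exp(b), 3), round(standard_error(10, 110, 50, 250), 3))
```

A negative coefficient (an odds ratio below 1) corresponds to smartphone respondents being less likely to leave the survey page than PC/tablet respondents.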

Confirming our expectations, the smartphone respondents yielded a lower prevalence and frequency of page switching in all three studies even after controlling for socio-demographic background variables. As to the time spent absent per page-switching event, however, the smartphone respondents in Study 1 exhibited a longer duration. Even though age, gender and education were predominantly introduced as the control variables, the analysis allowed us to identify groups of respondents who were more prone to page switching. The results indicated that respondents aged 40 and older yielded a lower prevalence of page switching compared to respondents of 18–29 years, although significantly lower frequency was not observed. As to the length of page switching, only respondents aged 50 to 59 years in Study 2 showed a longer time spent absent. Overall, education and gender did not exert any consistent effects on the prevalence, frequency and length of page switching. The overall low fit of the models indicates that variables other than socio-demographics and the device used (e.g., the situational context of survey taking) might possibly explain page switching.

Page switching and data quality

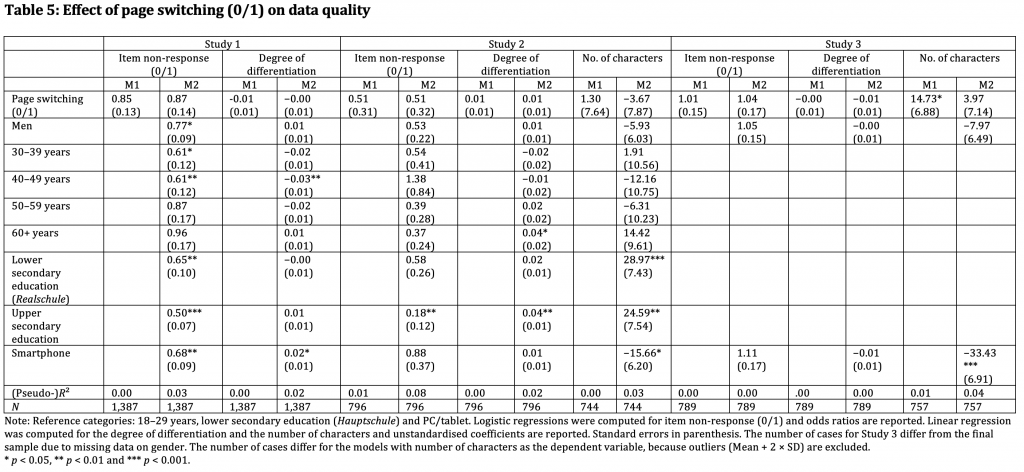

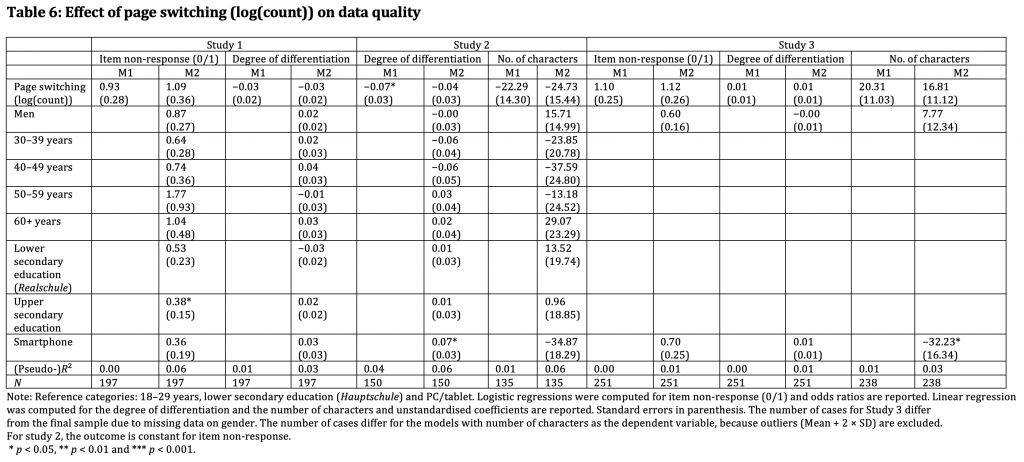

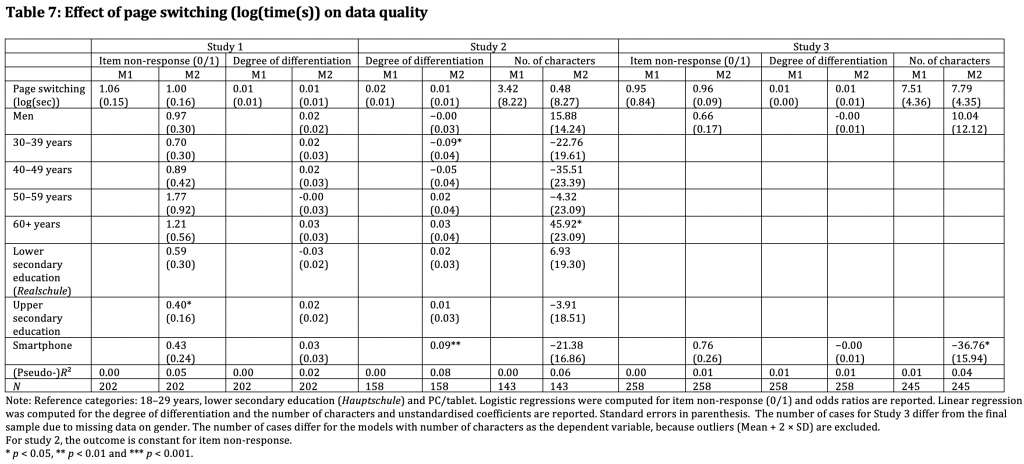

Logistic and linear regression models were computed to investigate the association between page switching and data quality. We regressed item non-response (dichotomous variable: no item non-response in the questionnaire vs. at least one item missing), mean degree of differentiation in the grid questions and the mean length of answers to open-ended questions (number of characters) on the prevalence, frequency and duration of page switching. The first model (M1) for each study (Tables 5–7) and the data quality indicator included only the page switching variable. The control variables of gender, age, education and device used were added to the second model (M2). As in the analysis described above, the models featuring the number of page-switching events and time absent per event included only page-switching respondents and excluded outliers for count and time, respectively. Furthermore, the models for the length of answers to open-ended questions excluded outliers based on the number of characters. Outliers were always excluded using the mean plus two times the SD as the threshold.

The results indicate that the prevalence of page switching typically had no effect on data quality regardless of the quality indicator. Neither the simple models M1 without the control variables nor the more complex models M2 with these variables yielded statistically significant effects (Table 5). Similarly, the frequency of page switching also showed no significant effects on the three data quality indicators (Table 6). The negative association of the number of page-switching events and the degree of differentiation for Study 2 in M1 disappeared in M2 after the control variables were added. As the coefficient of using a smartphone for the survey on the degree of differentiation is significant and positive, the effect was likely driven by the PC/tablet users producing higher non-differentiation and a higher number of page-switching events than the smartphone respondents (who were presented stand-alone items). Finally, note that no analysis could be run on item missing and the count variable for Study 2 due to a constant outcome variable remaining in the sample after the exclusion of break-offs and navigators (no item missing).

Finally, we assessed the effects of the duration of page switching on the three data quality indicators (Table 7). The results typically yielded no statistically significant effects of the duration of the page switching events on the quality of the data provided throughout the questionnaire. The only exception concerned the number of characters in the open-ended questions in Study 3, which showed a positive association between page switching (0/1) and the number of characters. However, controlling for device renders this effect insignificant, because the PC/tablet respondents who produced more characters were also more likely to leave the survey page. Again, the models on item missing for Study 2 could not be computed due to a constant outcome variable.

Summary and discussion

In this study, we investigated respondents’ on-device multitasking using client-side browser data (Schlosser & Höhne, 2018). Using two waves of a survey conducted in an opt-in panel and a survey among former university applicants, we analysed the prevalence of respondents leaving the survey page. In addition, we assessed the frequency and duration of this behaviour. Page switching was analysed depending on the device used (PC/tablet vs. smartphone). We also addressed the question of whether the page-switching respondents produced lower data quality.

Our results indicated that the majority of the respondents did not leave the survey page at any time before completing the survey, suggesting that on-device multitasking is a rather infrequent phenomenon, especially among smartphone users. However, the respondents that switched to another web page or tab exhibited this behaviour more often than once in a survey. The results also indicate that the switching respondents changed to other windows or tabs more often in longer surveys. With respect to the consequences of page switching, the current study suggests that survey researchers need not be concerned about respondents temporarily leaving the survey, nor do they need to correct for or take countermeasures against page switching.

However, these findings might be at least partly attributed to our definition of on-device multitasking. Considering the number of page-switching events and time absent per event among the page-switching respondents, the results indicate that page switching is quite heterogeneous, given the long-tailed distribution with only a few respondents leaving the survey page often and/or for a longer time. Accordingly, it is debatable which page-switching events should be counted as multitasking based on the time absent from the survey page. In our analysis, we did not set any time threshold for a page-switching event to be considered as potentially detrimental to the response process. However, very brief page-switching events of under one or two seconds might be unintentional behaviour (unintentional clicks) or merely an indication of re-arranging tabs and windows on the screen, and should therefore not be interpreted as substantial multitasking. Furthermore, events under a certain threshold of a few seconds might not be long enough to interfere with the response process in terms of attention and working memory. By contrast, longer interruptions caused by e-mails, text messages or WhatsApp messages displayed as pop-ups on top of the survey page have the potential to disturb the response process considerably. Given this background, future research should investigate whether the correlation of page switching and data quality is affected by (arbitrarily chosen) time thresholds. Furthermore, it is also important to consider the causes of page-switching events.
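A threshold sensitivity check of the kind suggested here could be sketched as follows. The data structure (a mapping from respondent to the list of absence durations in seconds), the function name and the threshold values are all assumptions for illustration; no such analysis was run in this study.

```python
from typing import Dict, List, Sequence


def prevalence_by_threshold(
    absences: Dict[str, List[float]], thresholds: Sequence[float]
) -> Dict[float, float]:
    """Share of respondents with at least one absence lasting at least t seconds."""
    n = len(absences)
    result: Dict[float, float] = {}
    for t in thresholds:
        switchers = sum(
            1 for durations in absences.values() if any(d >= t for d in durations)
        )
        result[t] = switchers / n if n else 0.0
    return result


# Toy data: absence durations in seconds per respondent (hypothetical).
toy = {
    "r1": [],
    "r2": [0.8],
    "r3": [1.5, 30.0],
    "r4": [120.0],
}
print(prevalence_by_threshold(toy, [0, 2, 60]))
```

With a threshold of 0 every logged event counts, whereas higher thresholds progressively reclassify respondents with only brief absences as non-switchers; the data quality models could then be re-estimated for each resulting indicator.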

When interpreting these results, one must keep in mind several limitations of the data and measurements of multitasking via page switching. The analysis did not rely on probability-based samples of the general population. Instead, we used an opt-in panel with quota sampling (Studies 1 and 2) and a convenience sample of university applicants (Study 3). As our results are based on experienced, incentivised and presumably motivated respondents, the prevalence of page switching might be lower in our samples as compared to the general population. In addition, the impact of page switching on data quality might be more severe in a less motivated general population sample. The sample reductions (break-offs and respondents navigating backwards throughout the survey) resulted in increased standard errors. If a small effect of page switching on data quality exists, we might not have been able to detect it due to insufficient sample size. It is also important to note that we used a rather limited set of background variables to control for self-selection of the device used, and our set of data quality indicators was limited. Further analysis should rely on a broader set of variables when controlling for self-selection of the device and when assessing data quality.

This research analysed page switching at the questionnaire level. However, to study the impacts of the characteristics of each survey page, one should assess multitasking and its impact on data quality on the page level considering the question type or the number of items per page (Höhne, Schlosser, Couper, & Blom, 2020). Page switching might only affect data quality on the same page (or the following one(s)), and such effects would not show up in the overall analysis. Therefore, a page-wise approach to address page switching and data quality would allow us to investigate the assumption that multitasking might even give general relief from the response burden, so that respondents can provide better quality upon return to the survey. This speculation is based on a study which reported that respondents who self-reported distractions during a survey were more likely to produce high-quality data (Roßmann, 2017).

Finally, one must note that browser-based paradata only allow detection of on-device multitasking and do not capture whether respondents engage in other off-device activities. In order to obtain the full picture, we propose using both self-reports and non-reactive paradata in future studies.

Appendix

References

- Aizpurua, E., Heiden, E. O., Park, K. H., Wittrock, J., & Losch, M. E. (2018). Investigating respondent multitasking and distraction using self-reports and interviewers’ observations in a dual-frame telephone survey. Survey Methods: Insights from the Field, 1-10.

- Callegaro, M. (2013). Paradata in web surveys. In F. Kreuter (Ed.), Improving surveys with paradata: Analytic uses of process information (pp. 261-276). Hoboken, NJ: Wiley.

- Couper, M. P. (2000). Web surveys. A review of issues and approaches. Public Opinion Quarterly, 64, 464-494.

- Höhne, J. K., & Schlosser, S. (2018). Investigating the adequacy of response time outlier definitions in computer-based web surveys using paradata SurveyFocus. Social Science Computer Review, 36(3), 369-378.

- Höhne, J. K., Schlosser, S., Couper, M. P., & Blom, A. G. (2020). Switching away: Exploring on-device media multitasking in web surveys. Computers in Human Behavior, 106417.

- Kennedy, C. K. (2010). Nonresponse and measurement error in mobile phone surveys. (Doctor of Philosophy), University of Michigan, Michigan.

- Krosnick, J. A. (1991). Response strategies for coping with the cognitive demands of attitude measures in surveys. Applied Cognitive Psychology, 5(3), 213-236.

- Lavrakas, P. J., Tompson, T. N., Benford, R., & Fleury, C. (2010). Investigating data quality in cell phone surveying. Paper presented at the annual American Association for Public Opinion Research conference, Chicago, Illinois.

- Lottridge, D., Marschner, E., Wang, E., Romanovsky, M., & Nass, C. (2012). Browser Design Impacts Multitasking. Proceedings of the Human Factors and Ergonomics Society Annual Meeting, 56(1), 1957-1961.

- McCarty, J. A., & Shrum, L. J. (2000). The measurement of personal values in survey research: A test of alternative rating procedures. Public Opinion Quarterly, 64(3), 271-298.

- Roßmann, J. (2017). Satisficing in Befragungen: Theorie, Messung und Erklärung. Springer-Verlag.

- Salvucci, D. D., & Taatgen, N. A. (2010). The multitasking mind. Oxford University Press.

- Schlosser, S., & Höhne, J. K. (2018). ECSP – Embedded Client Side Paradata. Zenodo, 1-23.

- Sendelbah, A., Vehovar, V., Slavec, A., & Petrovcic, A. (2016). Investigating respondent multitasking in web surveys using paradata. Computers in Human Behavior, 55, 777-787.

- Tourangeau, R., Rips, L. J., & Rasinski, K. (2000). The psychology of survey response. Cambridge University Press.

- Zwarun, L., & Hall, A. (2014). What’s going on? Age, distraction, and multitasking during online survey taking. Computers in Human Behavior, 41, 236-244.